Ayatori: An Experimental Agent Orchestration Engine in Clojure

An experiment in graph-based, capability-driven agent orchestration

This started as a simple question: what is an agent framework actually doing?

A few months ago I built a minimal agent engine from scratch in Clojure to find out. That engine used a graph model: nodes were pure functions, edges defined routing, and a loop drove execution. The short answer was that it is a recursive loop, some JSON parsing, and a state machine. The details are in the earlier article on that engine.

But once you strip the agent down to its core, a different question surfaces. If agents can call other agents, how does authority move between them? That led me back to Spritely Goblins, which I already knew. OCapN came from there. When I tried to interpret those ideas in a cloud-native context, I ran into Biscuit tokens. I wrote about reconstructing Biscuit in Clojure. The result was kex, a minimal proof of concept.

Ayatori is where those two threads converge: the graph model from the first experiment, combined with a capability-based design for agent composition and authority.

What Ayatori is

Ayatori is an experimental graph-based AI agent orchestration engine built in Clojure. Nodes are mostly pure functions. Edges define routing. The executor handles the rest.

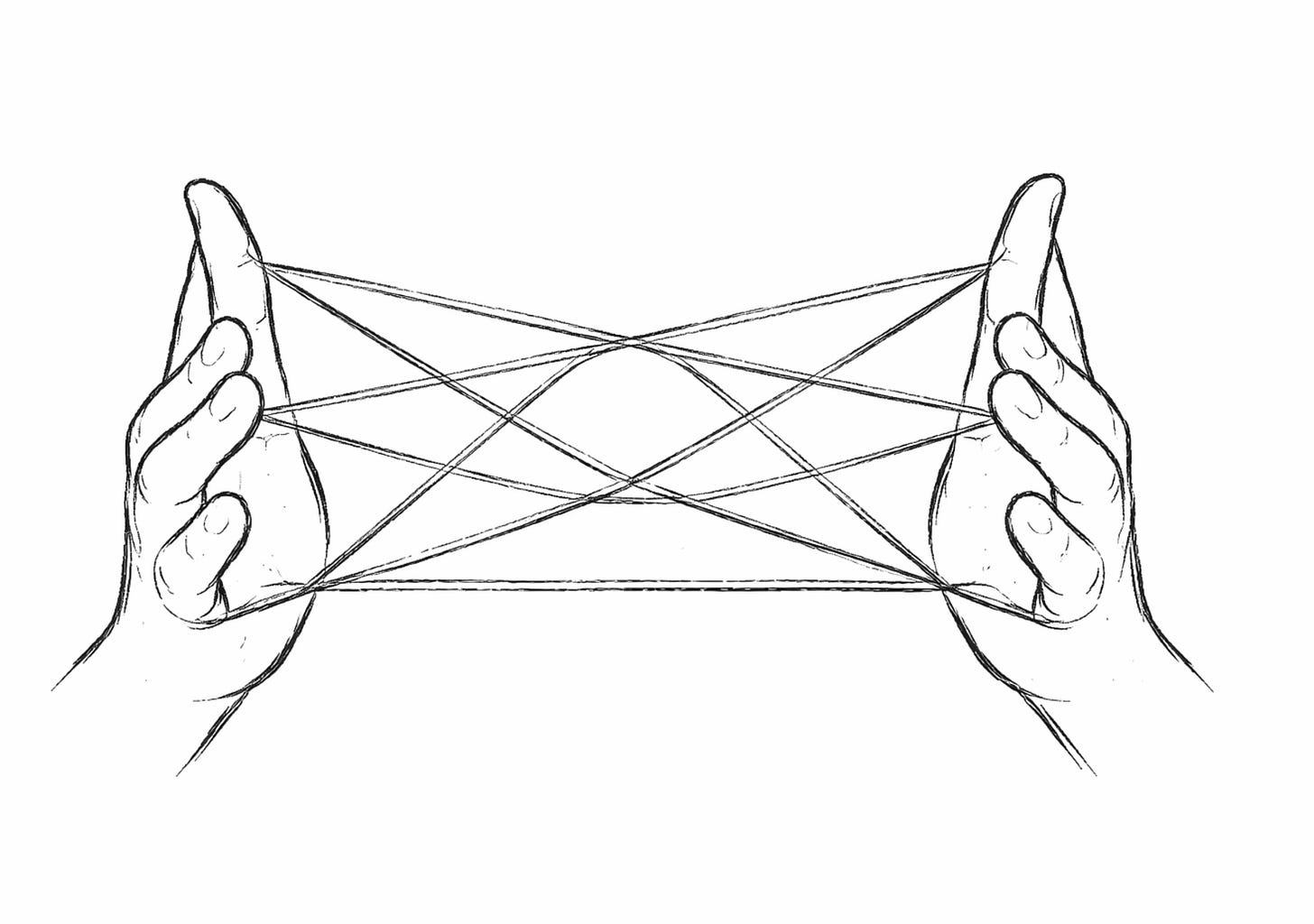

The name comes from ayatori, the Japanese string figure game known in English as cat’s cradle. Agents pass capabilities to each other, each move shaping what comes next. The attenuation and delegation that constrain those moves will come with kex integration.

It is currently a proof of concept, under active development. Not production-ready.

The core model

An agent is a graph. You define nodes and edges, declare what capabilities it exposes and what dependencies it needs, and the system wires everything together at start time.

    deps -> agent(nodes, edges) -> caps

Nodes can be pure functions, stateful handlers, LLM nodes, fan-out nodes, or nested agent nodes. The simplest case is a pure function: takes input, returns output. Routing is data: edges are keywords for unconditional routing, or maps for conditional routing based on a :route key the node returns. The executor handles dispatch, state, and middleware.
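To make the routing rules concrete, here is a minimal sketch of a graph executor in plain Clojure. It is not Ayatori's executor: `next-node`, `run-graph`, and the `:done` terminal (a stand-in for `:ayatori/done`) are hypothetical names chosen for illustration. It only shows how keyword edges and map edges could be resolved.

```clojure
;; Hypothetical sketch, not Ayatori's implementation.
(defn next-node
  "Resolves the next node from an edge definition.
  A keyword edge is unconditional; a map edge is looked up by the
  :route key the node returned."
  [edge output]
  (cond
    (keyword? edge) edge
    (map? edge)     (get edge (:route output))
    :else           nil))

(defn run-graph
  "Runs nodes starting at `entry` until no edge matches or :done is reached.
  Each node is a function from an input map to an output map."
  [{:keys [nodes edges]} entry input]
  (loop [node entry
         data input]
    (let [output ((get nodes node) data)
          target (next-node (get edges node) output)]
      (if (or (nil? target) (= target :done))
        (dissoc output :route)
        ;; :route is routing metadata, so it is dropped before the next node.
        (recur target (dissoc output :route))))))

(def graph
  {:nodes {:classify (fn [{:keys [n] :as in}]
                       (assoc in :route (if (even? n) :even :odd)))
           :halve    (fn [in] (update in :n quot 2))
           :triple   (fn [in] (update in :n * 3))
           :report   (fn [in] (select-keys in [:n]))}
   :edges {:classify {:even :halve :odd :triple} ; conditional edge
           :halve    :report                      ; unconditional edge
           :triple   :report
           :report   :done}})

(run-graph graph :classify {:n 10})
;; => {:n 5}
```

Because the edges are plain data, the same graph can be inspected, validated, or rewritten before it is ever run.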

A simple example. An LLM agent with tool calling and structured output:

    ;; Assumed aliases: aya and mw for Ayatori's core and middleware
    ;; namespaces, async for clojure.core.async.

    (def search-tool
      {:name "search"
       :description "Search the order database"
       :schema [:map [:query :string]]
       :handler (fn [{:keys [query]}]
                  (println "searching for:" query)
                  [{:id 10258 :date "2026-03-20" :customer "Jane Doe" :total 219.47}
                   {:id 10257 :date "2026-03-19" :customer "John Doe" :total 134.00}
                   {:id 10256 :date "2026-03-18" :customer "John Jr." :total 87.39}])})

    (def assistant
      (aya/make-agent
       {:nodes {:llm {:type :llm
                      :client {:provider :ollama
                               :model "gpt-oss:20b"
                               :base-url "http://localhost:11434"}
                      :prompt "You are a helpful assistant."
                      :tools [search-tool]
                      :response-format {:type :json-schema
                                        :schema [:map
                                                 [:answer :string]
                                                 [:order-count :int]]
                                        :max-retries 2}}
                :search (:handler search-tool)}
        :edges {:llm {:done :ayatori/done
                      :search :search}
                :search :llm}
        :caps {:chat {:entry :llm
                      :input [:map [:content :string]]
                      :output [:map [:answer :string] [:order-count :int]]}}}))

    (def sys (-> (aya/make-system {:agents {:assistant assistant}
                                   :middleware [(mw/make-tap)]})
                 aya/start!))

    (async/<!! (aya/run sys :assistant :chat {:content "Find recent orders"}))
    ;; => {:answer "Here are the most recent orders: ..." :order-count 3}

Tool calls route through graph nodes. Middleware observes every step. :response-format enforces the output schema, with self-healing retries via :max-retries if the LLM returns invalid output.
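The retry behaviour can be pictured as a loop that validates each response and feeds the failure back into the next attempt. The sketch below is an assumption about the general pattern, not Ayatori's code: `call-with-retries`, `valid-answer?`, and `fake-llm` are hypothetical, and a plain predicate stands in for the Malli schema.

```clojure
;; Hypothetical sketch of validate-and-retry; not Ayatori's implementation.
(defn call-with-retries
  "Calls `llm-fn` with `messages`. If the response fails `valid?`, appends a
  correction message and tries again, up to `max-retries` extra attempts."
  [llm-fn valid? messages max-retries]
  (loop [msgs messages
         retries-left max-retries]
    (let [response (llm-fn msgs)]
      (cond
        (valid? response)
        response

        (pos? retries-left)
        (recur (conj msgs {:role :user
                           :content (str "Invalid output: " (pr-str response)
                                         ". Reply with the required fields.")})
               (dec retries-left))

        :else
        (throw (ex-info "LLM output failed validation"
                        {:last-response response}))))))

;; Stand-in for checking [:map [:answer :string] [:order-count :int]].
(defn valid-answer? [m]
  (and (map? m)
       (string? (:answer m))
       (int? (:order-count m))))

;; A fake model that only answers correctly after being corrected once.
(defn fake-llm [msgs]
  (if (> (count msgs) 1)
    {:answer "Three recent orders" :order-count 3}
    {:answer "Three recent orders"}))

(call-with-retries fake-llm valid-answer?
                   [{:role :user :content "Find recent orders"}]
                   2)
;; => {:answer "Three recent orders", :order-count 3}
```

Passing the invalid response back in the correction message is what makes the retry "self-healing" in this sketch: the model sees what it got wrong instead of being asked the same question again.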

Agents expose caps and declare deps. The system wires deps to caps at start time. An agent can use another agent as a node.

    (def doubler
      (aya/make-agent
       {:nodes {:dbl (fn [input] {:doubled (* 2 (:n input))})}
        :edges {}
        :caps {:main {:entry :dbl}}}))

    (def caller
      (aya/make-agent
       {:nodes {:prep (fn [input] {:n (:v input)})}
        :edges {:prep :compute}
        :deps [:compute]
        :caps {:main {:entry :prep}}}))

    (def sys (-> (aya/make-system
                  {:agents {:caller caller :doubler doubler}
                   :wiring {:caller {:compute [:doubler :main]}}})
                 aya/start!))

    (async/<!! (aya/run sys :caller :main {:v 5}))
    ;; => {:doubled 10}

The inner agent runs with its own execution scope but shares the same store and trace-id. Caps can carry Malli schemas for input and output validation. rewire! changes dep targets at runtime without restarting the system.
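One way to picture dep-to-cap wiring is an indirection table that is consulted on every call, so changing the table changes the target without touching the caller. The following is a hypothetical sketch in plain Clojure: `registry`, `wiring`, `call-dep`, and this version of `rewire!` are illustrative names and do not reflect Ayatori's internals or the signature of its own rewire!.

```clojure
;; Hypothetical sketch; names and shapes are assumptions, not Ayatori's API.
(def registry
  "Capabilities exposed by each agent: agent -> cap -> function."
  {:doubler {:main (fn [{:keys [n]}] {:result (* 2 n)})}
   :tripler {:main (fn [{:keys [n]}] {:result (* 3 n)})}})

(def wiring
  "Mutable table: agent -> dep name -> [target-agent target-cap]."
  (atom {:caller {:compute [:doubler :main]}}))

(defn call-dep
  "Resolves `dep` for `agent-id` at call time and invokes the target cap."
  [agent-id dep input]
  (let [[target-agent target-cap] (get-in @wiring [agent-id dep])
        cap-fn (get-in registry [target-agent target-cap])]
    (when-not cap-fn
      (throw (ex-info "Unwired dependency" {:agent agent-id :dep dep})))
    (cap-fn input)))

(defn rewire!
  "Points `dep` of `agent-id` at a different [agent cap] pair."
  [agent-id dep target]
  (swap! wiring assoc-in [agent-id dep] target))

(call-dep :caller :compute {:n 5})
;; => {:result 10}

(rewire! :caller :compute [:tripler :main])

(call-dep :caller :compute {:n 5})
;; => {:result 15}
```

The caller only ever names its dep (`:compute`), which is why the target can change underneath it.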

Where kex comes in

Right now, capability security is structural. Possessing a CapHandle is sufficient to invoke it. There is no cryptographic verification.

When kex is integrated, cross-node calls will carry token chains. Each delegation can only narrow the original capability, never expand it. An agent receiving a delegated capability cannot do more than what it was given. This is still on the roadmap.
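The narrowing rule can be illustrated without any cryptography. In the sketch below a capability is just a map holding a set of allowed operations, and delegation intersects that set with what is being requested. This illustrates the attenuation property only. It is not how kex or Biscuit represent tokens, and `attenuate` and `allowed?` are hypothetical names.

```clojure
(require '[clojure.set :as set])

;; Hypothetical model: a capability is plain data, with no signatures
;; and no token chain.
(defn attenuate
  "Returns a delegated capability whose operations are the intersection of
  the parent's operations and the requested ones, so it can never grow."
  [cap requested-ops]
  (-> cap
      (update :ops set/intersection (set requested-ops))
      (update :depth (fnil inc 0))))

(defn allowed? [cap op]
  (contains? (:ops cap) op))

(def root-cap {:resource :orders :ops #{:read :write :delete}})

(def reader (attenuate root-cap [:read]))

;; Asking for :delete again has no effect: the parent no longer holds it.
(def sub-reader (attenuate reader [:read :delete]))

(allowed? reader :read)
;; => true

(allowed? sub-reader :delete)
;; => false
```

In a real token scheme the same property has to be enforced by verification rather than by trusting the holder to call `attenuate`, which is the gap the planned kex integration is meant to close.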

The source code is available here: https://github.com/serefayar/ayatori

What is missing

Ayatori is a local prototype. No distributed execution yet. CapHandles cannot be serialized or sent across the wire. The middleware dispatch is synchronous. Observability covers individual runs but not topology visualization. Time-based timeout is not implemented, though :max-steps (default 100) provides step-based protection against infinite loops.
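A step budget of this kind can be sketched as a counter checked on every iteration. The code below is a generic illustration, not Ayatori's executor: `run-steps` and the shape of the step function are assumptions, and only the default of 100 comes from the text above.

```clojure
;; Hypothetical sketch of a step budget; not Ayatori's implementation.
(defn run-steps
  "Applies `step` to `state` until the state has a truthy :done? key.
  Throws if the state is still not done after `max-steps` steps."
  ([step state] (run-steps step state 100))
  ([step state max-steps]
   (loop [state state
          steps 0]
     (cond
       (:done? state)       (assoc state :steps steps)
       (>= steps max-steps) (throw (ex-info "Step limit exceeded"
                                            {:max-steps max-steps}))
       :else                (recur (step state) (inc steps))))))

;; Terminates on its own after three steps.
(run-steps (fn [s]
             (let [n (inc (:n s))]
               {:n n :done? (>= n 3)}))
           {:n 0})
;; => {:n 3, :done? true, :steps 3}

;; A step function that never finishes is cut off by the budget.
(try
  (run-steps (fn [s] (update s :n inc)) {:n 0} 10)
  (catch clojure.lang.ExceptionInfo e
    (ex-data e)))
;; => {:max-steps 10}
```

A step count bounds the amount of work but not wall-clock time, so a single slow node can still block a run. That is the gap a time-based timeout would cover.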

These are the next problems to solve.

Multi-node execution is a harder problem. When an agent on one node calls an agent on another and expects a result back, the continuation has to survive the network boundary. Supervision, timeout handling, and meaningful error propagation across agent boundaries are in the same category. These are things I want to tackle, but they will take time.

Why I built this

My day-to-day work has not involved writing production code for a while. I try to stay hands-on anyway. Clojure is what I reach for when I want to think through a problem with code.

When I started exploring agentic systems, I kept building things to understand them. The minimal agent engine was one experiment. Kex was another. Ayatori is where the two ideas merged into something more structured.

It is an experiment, not a product. The code is available on GitHub if you want to read it, run it, or point out what is wrong with it.

I will keep working on it when I have time. Distributed execution and kex integration are the next things I want to tackle. No timeline.